Abstract

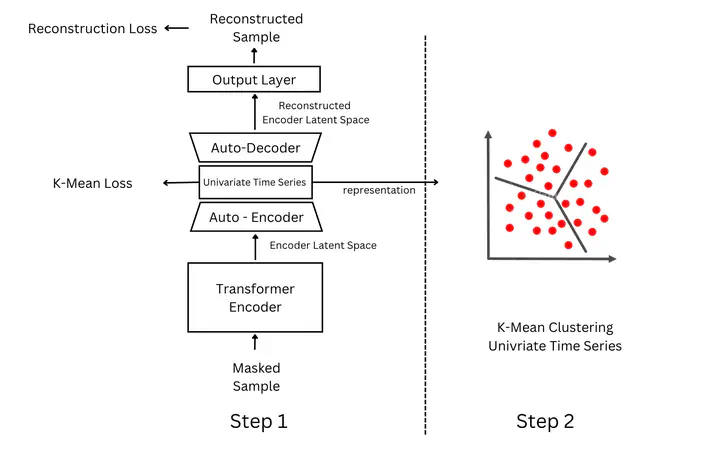

Multivariate Time Series (MTS) clustering, which groups samples that exhibit similar behavior across multiple temporal variables, holds significant promise for a wide range of applications. However, prevailing clustering algorithms often struggle with noise in raw time series data, weak feature correlation, and the need for manual preprocessing. In response, this paper introduces a novel MTS clustering framework based on the Transformer architecture, leveraging its multi-head attention mechanism to potentially capture intricate relationships among variables. The proposed approach first uses a Transformer to learn a cluster-oriented univariate representation of each MTS and then applies a clustering algorithm to these representations. The efficacy of the framework is supported by a series of experiments on real-world datasets.
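The two-stage pipeline described above (a Transformer maps each MTS to a univariate sequence, then a clustering algorithm groups those sequences) can be illustrated with a minimal NumPy sketch. This is not the paper's implementation: the single self-attention layer with a residual connection, the sizes (`d_model=16`, 4 heads), all function names, and the use of k-means are hypothetical choices made for illustration. The weights are random rather than trained, because the abstract does not specify the cluster-oriented training objective.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax.
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def positional_encoding(T, d_model):
    # Standard sinusoidal positional encoding, shape (T, d_model).
    pos = np.arange(T)[:, None]
    i = np.arange(d_model)[None, :]
    angle = pos / np.power(10000.0, (2 * (i // 2)) / d_model)
    return np.where(i % 2 == 0, np.sin(angle), np.cos(angle))

def multi_head_self_attention(h, params, n_heads):
    # Scaled dot-product self-attention over time steps; h has shape (T, d_model).
    T, d_model = h.shape
    assert d_model % n_heads == 0, "d_model must be divisible by n_heads"
    d_head = d_model // n_heads
    def split(m):  # (T, d_model) -> (n_heads, T, d_head)
        return m.reshape(T, n_heads, d_head).transpose(1, 0, 2)
    q, k, v = (split(h @ params[w]) for w in ("Wq", "Wk", "Wv"))
    attn = softmax(q @ k.transpose(0, 2, 1) / np.sqrt(d_head))  # (n_heads, T, T)
    out = (attn @ v).transpose(1, 0, 2).reshape(T, d_model)     # merge heads
    return out @ params["Wo"]

def init_params(n_vars, d_model, rng):
    # Hypothetical random initialization; a real model would be trained.
    s = 1.0 / np.sqrt(d_model)
    p = {w: rng.normal(0.0, s, size=(d_model, d_model)) for w in ("Wq", "Wk", "Wv", "Wo")}
    p["W_in"] = rng.normal(0.0, 1.0 / np.sqrt(n_vars), size=(n_vars, d_model))
    p["w_out"] = rng.normal(0.0, s, size=(d_model, 1))
    return p

def encode_univariate(mts, params, n_heads):
    # Map one MTS of shape (T, n_vars) to a univariate sequence of shape (T,).
    T = mts.shape[0]
    d_model = params["W_in"].shape[1]
    h = mts @ params["W_in"] + positional_encoding(T, d_model)
    h = h + multi_head_self_attention(h, params, n_heads)  # residual connection
    return (h @ params["w_out"]).ravel()

def kmeans(X, k, rng, n_iter=100):
    # Plain Lloyd's k-means on the rows of X.
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        dist = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(-1)
        labels = dist.argmin(axis=1)
        new_centers = np.array([X[labels == j].mean(axis=0) if np.any(labels == j)
                                else centers[j] for j in range(k)])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers

# Synthetic demo: two groups of 3-variable series with different frequencies.
rng = np.random.default_rng(0)
n_per_group, T, n_vars = 10, 50, 3
t = np.linspace(0, 4 * np.pi, T)
samples = []
for freq in (1.0, 3.0):
    for _ in range(n_per_group):
        phase = rng.uniform(0, 0.5, size=n_vars)
        mts = np.sin(freq * t[:, None] + phase[None, :])
        samples.append(mts + 0.1 * rng.normal(size=(T, n_vars)))

params = init_params(n_vars, d_model=16, rng=rng)
reps = np.stack([encode_univariate(x, params, n_heads=4) for x in samples])  # (20, 50)
labels, _ = kmeans(reps, k=2, rng=rng)
print(labels)
```

Reducing each sample to one sequence means any standard univariate clustering method can be applied in the second stage; k-means is used here only because it is short to write, and with untrained weights the resulting labels carry no guarantee of recovering the synthetic groups.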

Keywords

Multivariate Time Series, Clustering, Transformer, Representation Learning, Deep Learning

Paper

Please use the link above to access the full paper. (Note: this paper has not been published or peer-reviewed.)